Anthropic’s Sudden Account Bans Leave More Than 60 Users in the Lurch

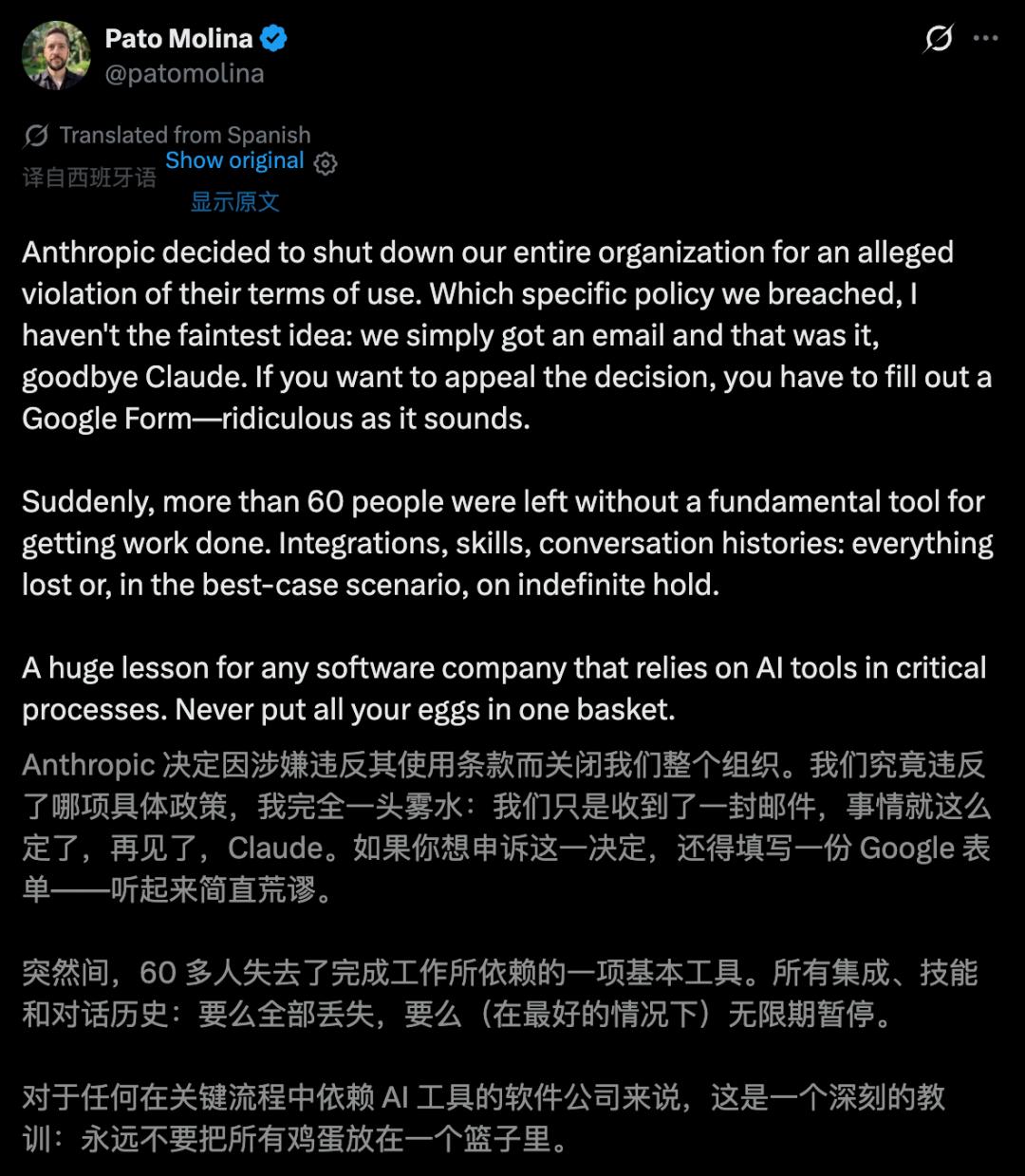

Belo, a fintech company serving millions in Latin America, faced a crisis when more than 60 of its employees found their Claude accounts banned overnight. The CTO discovered the problem while preparing to use Claude for work and instead received a cold email stating, “Your account has been suspended due to detected violations of usage policies.”

The team was directed to appeal the ban through a Google form and, in the meantime, was left without access to the tools it depended on for coding, analysis, and daily operations.

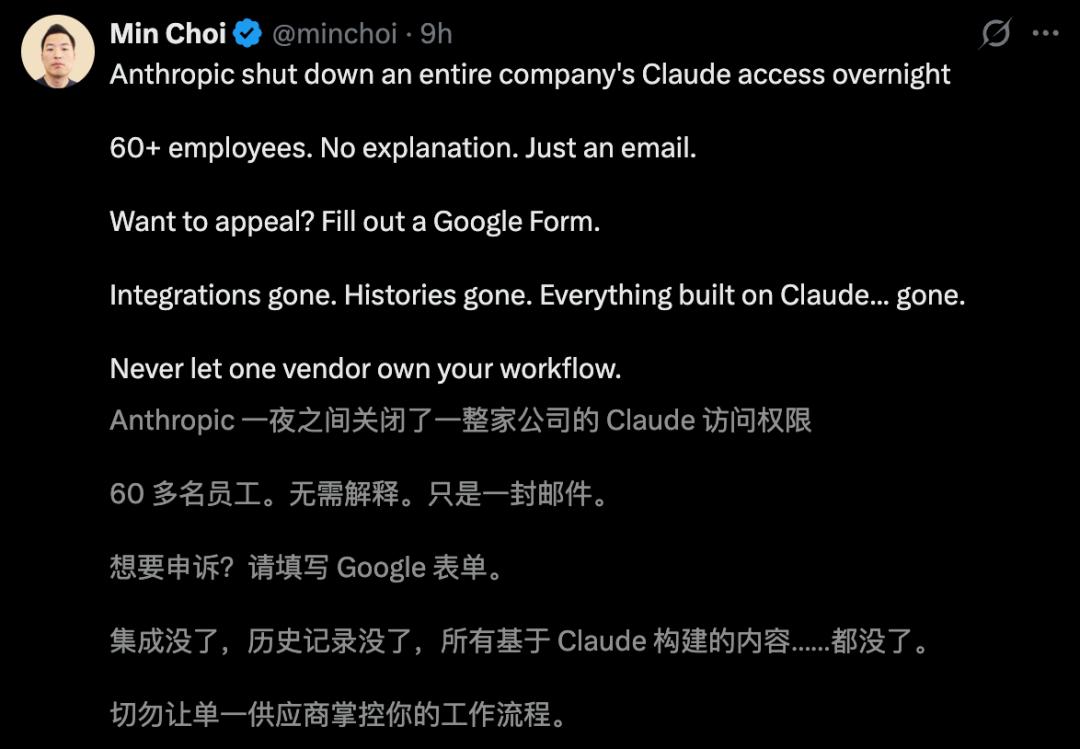

The news quickly went viral, prompting warnings from users: businesses should not bet everything on a single AI provider.

A Wake-Up Call for Belo

Belo’s team relied heavily on Claude for various tasks, including code reviews and customer service. The sudden ban disrupted their entire workflow, leading to significant financial losses.

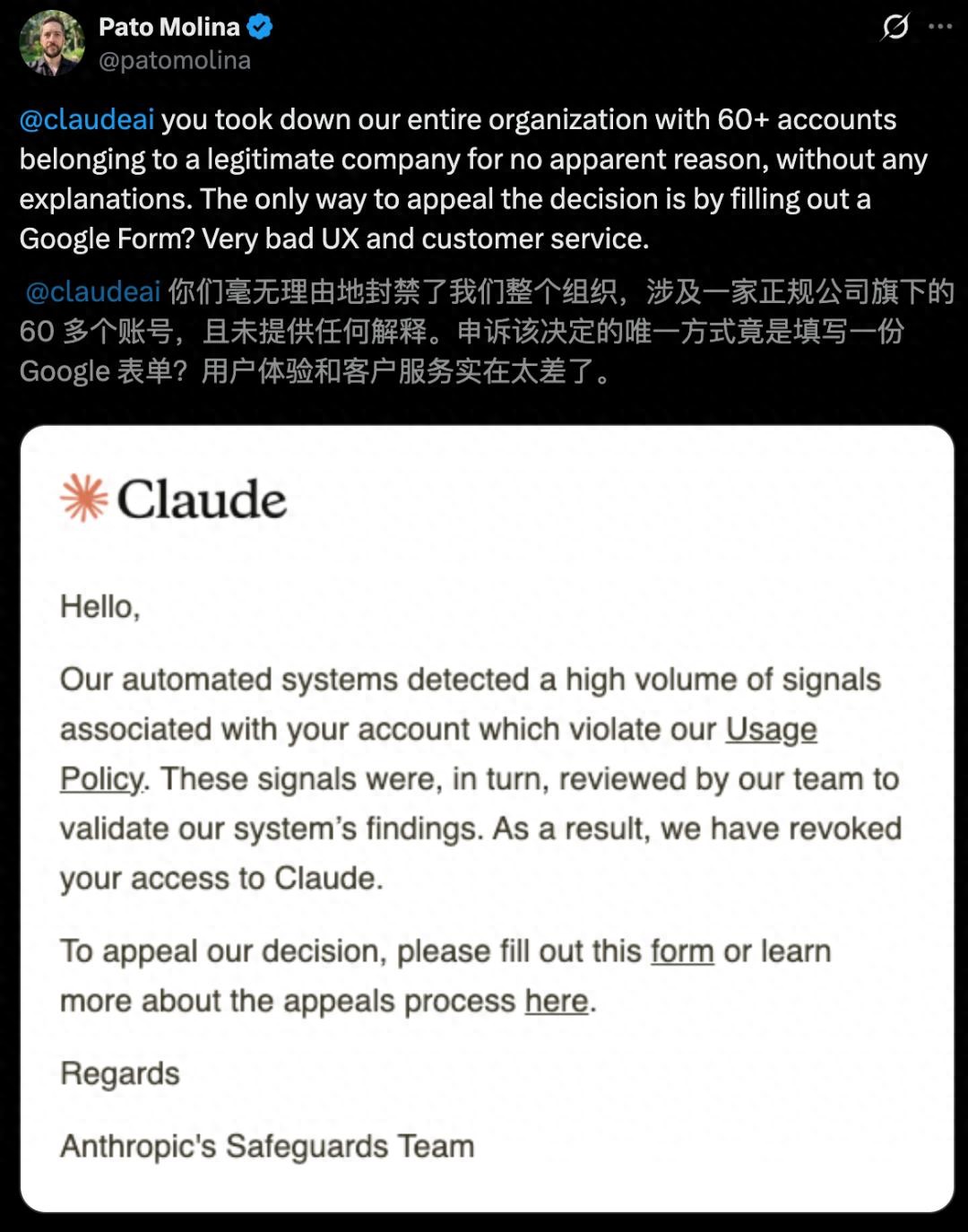

CTO Pato Molina expressed frustration on social media, questioning the justification for the mass ban and the lack of communication from Anthropic.

While the team awaited a response, it was able to keep operating because it had already deployed Gemini.

Delayed Response from Anthropic

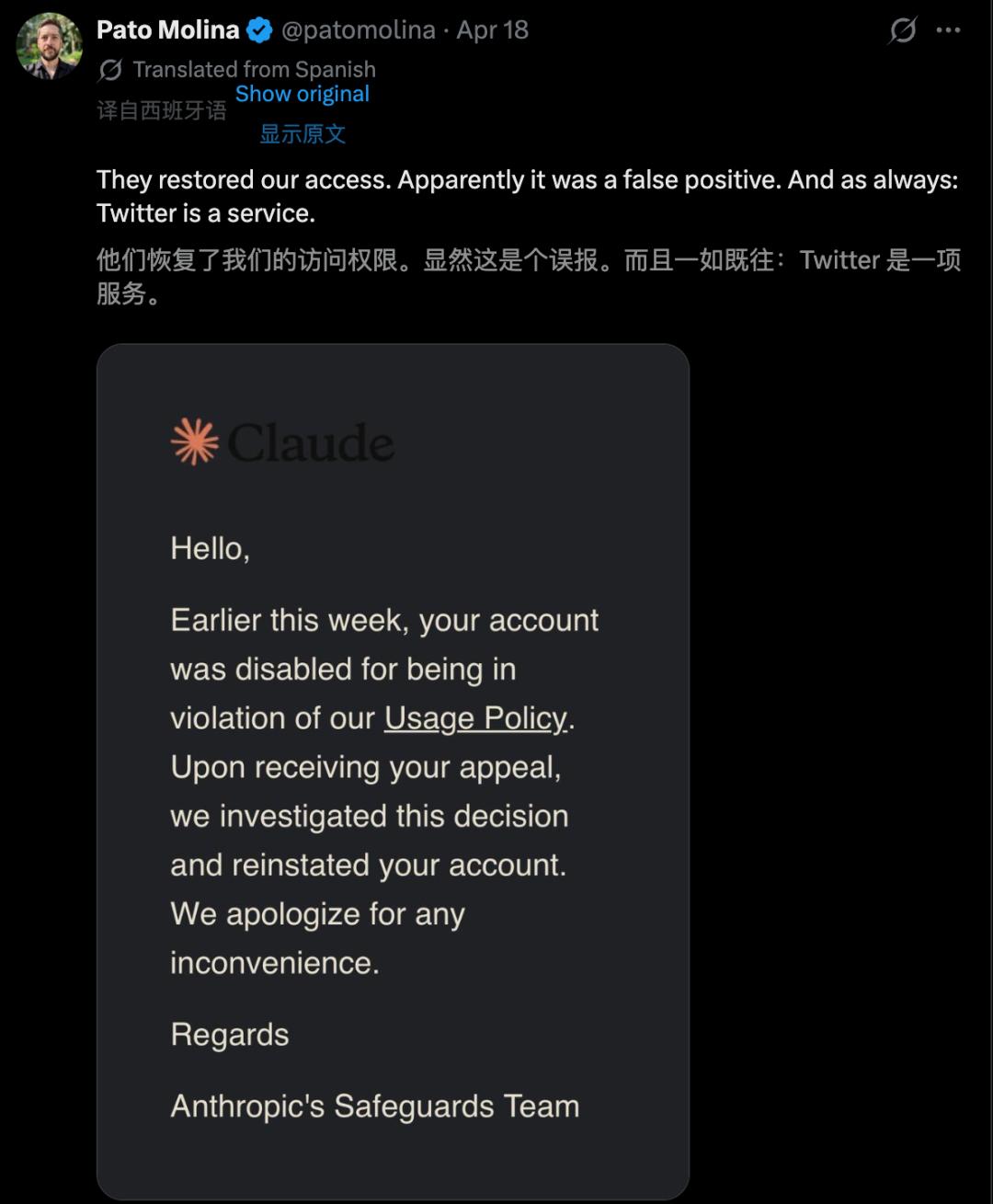

Anthropic’s eventual response was terse: it stated that the accounts were banned for policy violations but offered no details on which policies were breached or why the mass ban occurred. This lack of transparency left many in the developer community concerned about their own accounts.

The incident served as a stark reminder for companies that heavily rely on AI tools: do not put all your eggs in one basket. The importance of maintaining business continuity through multiple models was underscored by Belo’s experience.

While Gemini provided a temporary solution, the transition was costly, as all previous context and integrations were lost.
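For teams that want to act on this, one common pattern is to keep every model call behind a thin interface and try providers in order. The sketch below is illustrative only and is not something Belo or Anthropic has described: `Provider`, `ProviderUnavailable`, `complete_with_fallback`, and the two stand-in adapters are hypothetical names, and real adapters would wrap each vendor's own SDK.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


class ProviderUnavailable(Exception):
    """Raised by an adapter when its provider cannot serve the request."""


@dataclass
class Provider:
    name: str
    complete: Callable[[str], str]  # hypothetical adapter: prompt -> reply text


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try each provider in order and return (provider_name, reply)."""
    errors = []
    for provider in providers:
        try:
            return provider.name, provider.complete(prompt)
        except ProviderUnavailable as exc:
            # Record the failure and move on to the next provider.
            errors.append(f"{provider.name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-in adapters for illustration only (assumptions, not real SDK calls).
def suspended_primary(prompt: str) -> str:
    raise ProviderUnavailable("account suspended")


def working_backup(prompt: str) -> str:
    return f"backup reply to: {prompt}"


providers = [
    Provider("primary", suspended_primary),
    Provider("backup", working_backup),
]
name, reply = complete_with_fallback("Review this diff", providers)
print(name, reply)
```

A wrapper like this only keeps requests flowing. It does not carry over conversation context or provider-specific integrations, which is the part of the switch the article describes as costly.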

Recurring Issues with Anthropic

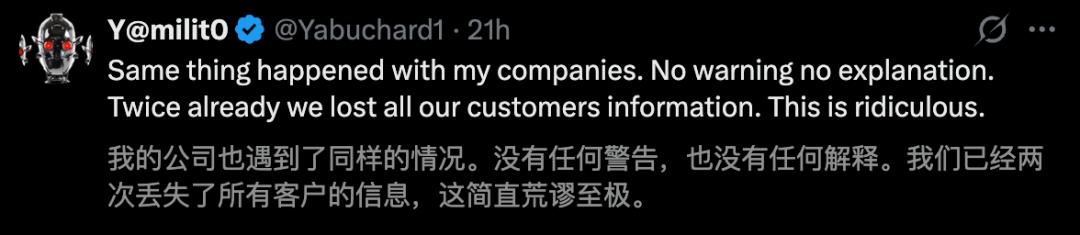

Belo’s experience is not isolated. Just a week prior, OpenClaw’s creator reported a similar account ban due to “suspicious activity.” Although the account was soon recovered, the episode highlighted ongoing concerns about Anthropic’s account management practices.

Earlier this year, Anthropic tightened security measures, leading to accidental bans of users relying on third-party integrations. Reports of users being mistakenly classified as minors and banned have also surfaced, raising questions about the reliability of AI systems.

The Risks of Sole Dependency on AI

Pato Molina’s experience emphasizes a crucial lesson: do not gamble your business’s future on a single AI provider. While Belo’s team eventually regained access to their accounts, the incident left lingering concerns about operational stability.

If your entire workflow depends on Claude, consider this: if Claude were to disappear tomorrow, could your company still function? If the answer is no, then you are not merely using an AI tool; you are gambling with your business’s survival.
