Anthropic’s Sudden Ban

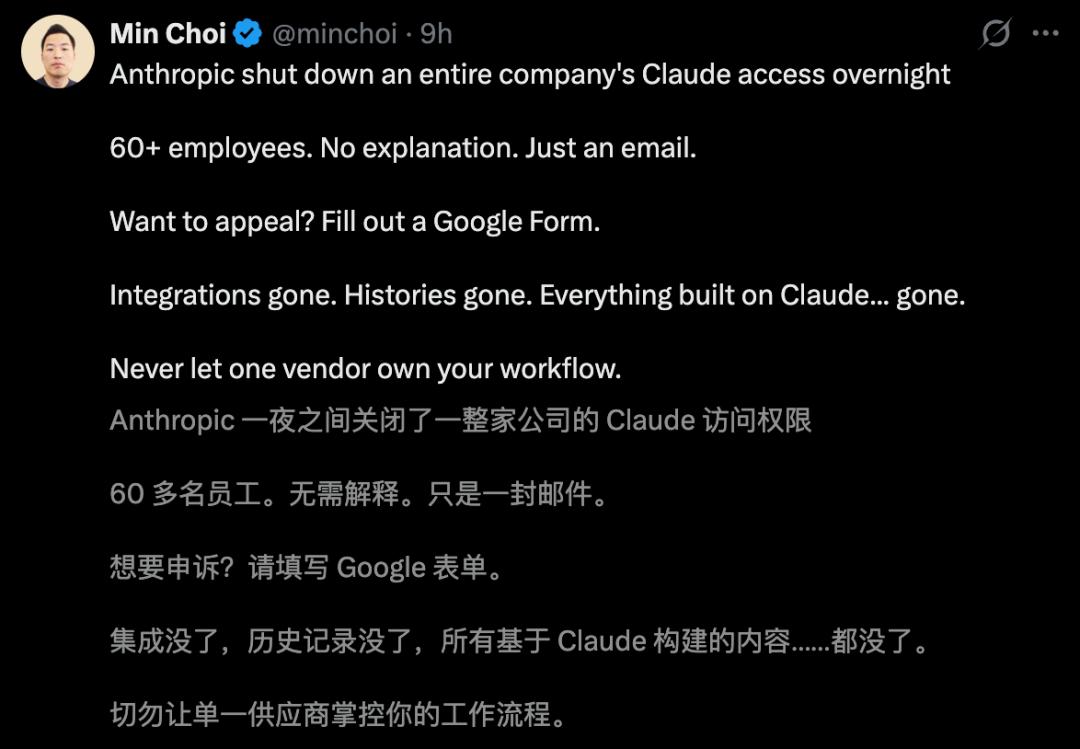

Sixty Claude accounts were abruptly disabled overnight without warning, stalling much of a company’s work. The incident has sparked outrage and highlighted the risks of relying solely on one AI provider.

The Incident

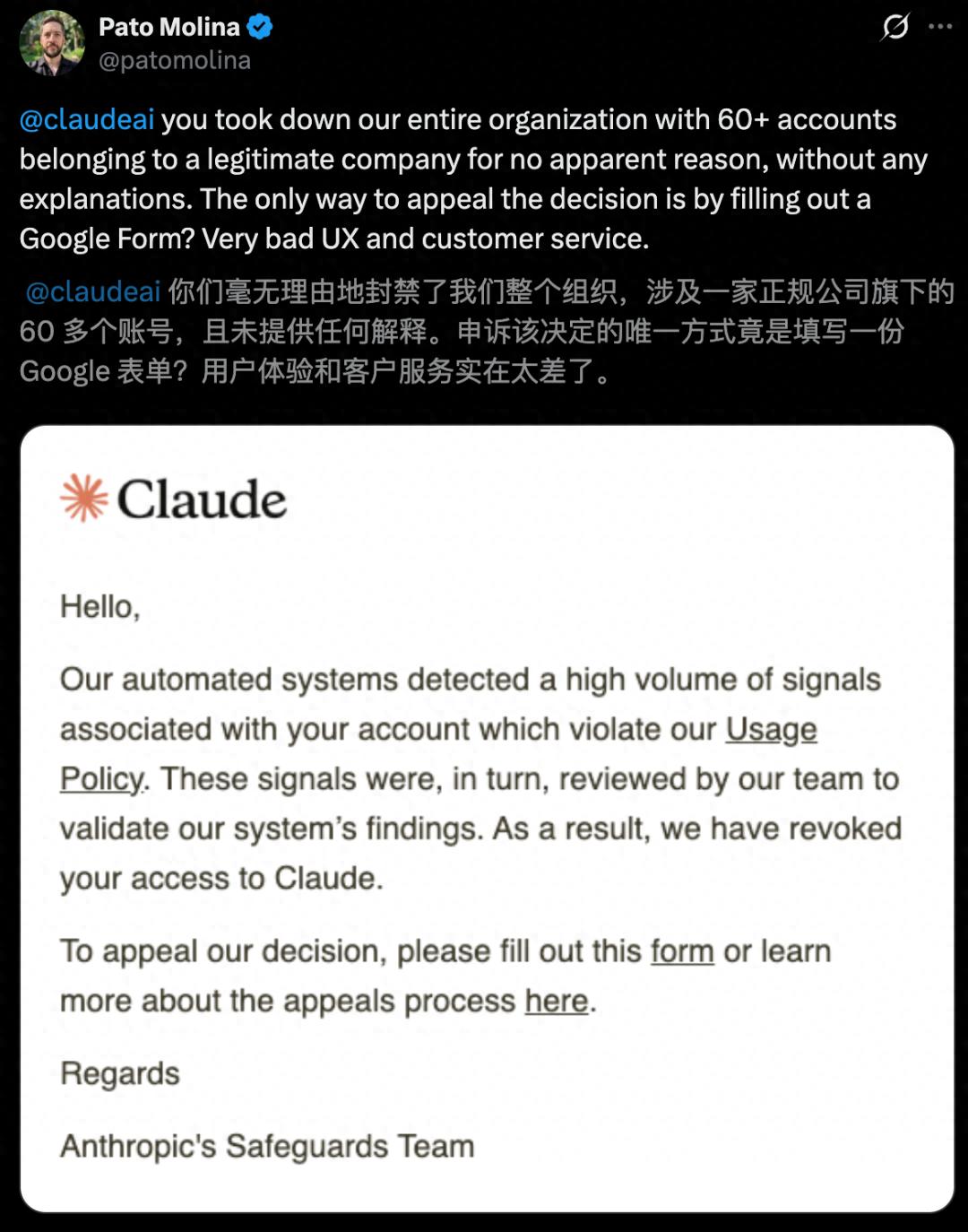

A fintech company, Belo, which serves millions in the Latin American market, faced a shocking disruption when their CTO discovered that all 60 Claude accounts were suspended. The only communication received was a cold email stating:

“Detected automated signals violating usage policies; your account has been suspended.”

To appeal, they were directed to fill out a Google form. The team, which relied heavily on Claude for coding, analysis, and customer service, was left in disarray.

Community Reaction

The news quickly went viral, with many advising companies not to put all their bets on a single AI vendor. The CTO, Pato Molina, expressed his frustration on social media, questioning the lack of explanation for the mass suspension.

Recovery Efforts

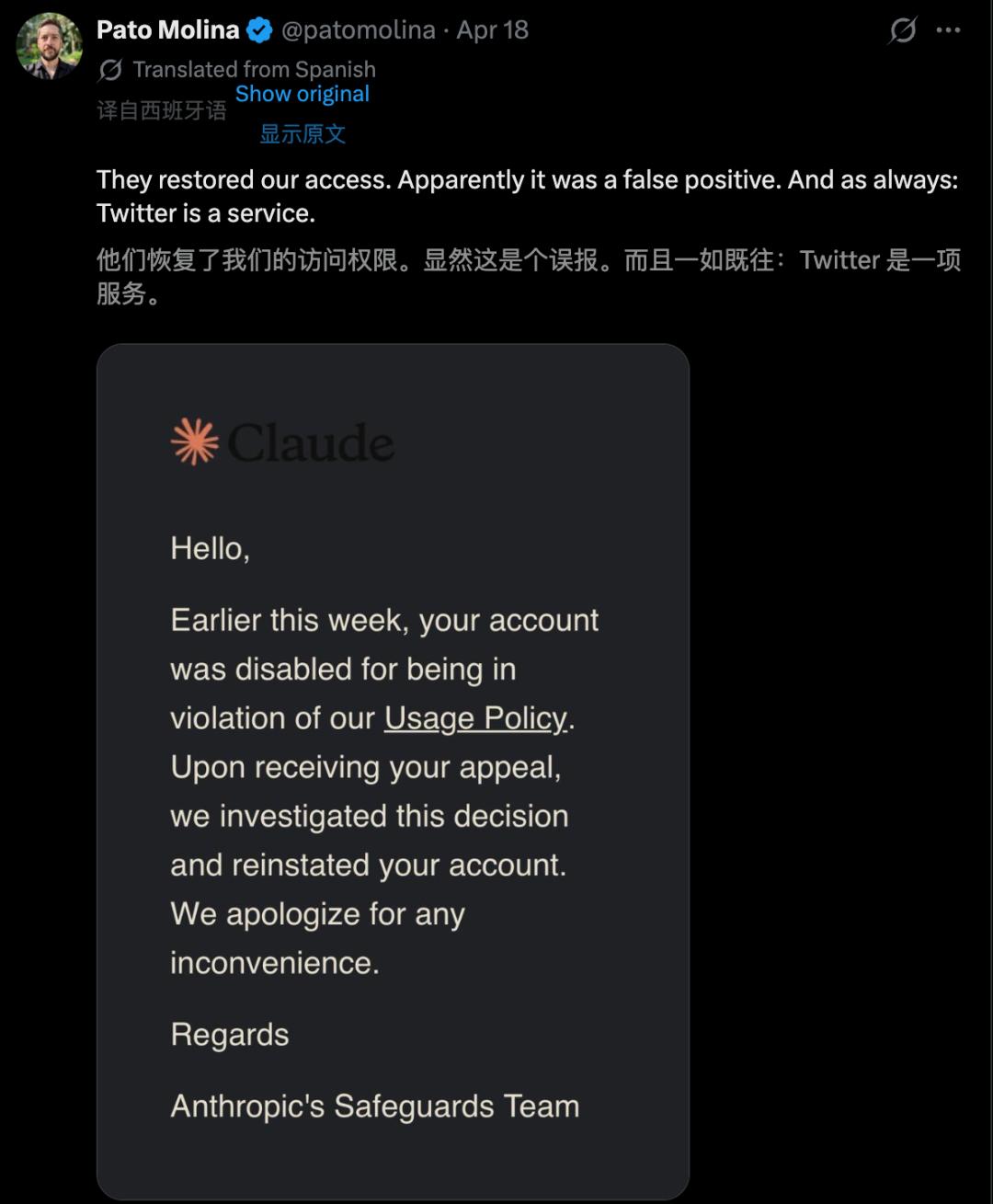

After realizing the accounts were disabled, the Belo team immediately submitted an appeal. The waiting period was agonizing, as every minute of halted workflow was costly. Fortunately, they had previously deployed Gemini, allowing them to continue operations without complete paralysis.

Anthropic’s Response

Anthropic’s response was delayed and lacked detail, simply stating that the accounts were suspended for policy violations. The email did not clarify which policies were violated or why the accounts were suspended simultaneously.

Lessons Learned

This incident serves as a stark reminder for companies heavily reliant on AI: do not place all your operational eggs in one basket. While multi-model strategies can be complex, they are crucial for business continuity. The sudden shutdown of Claude left Belo scrambling, and the transition to Gemini, while effective, came with significant costs in terms of lost context and workflow disruption.
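A multi-model strategy can start as a thin fallback layer that tries providers in order and moves on when one fails. The sketch below is only an illustration under stated assumptions: `complete_with_fallback`, `suspended_provider`, and `backup_provider` are hypothetical names, and the two provider functions are stand-ins for real vendor SDK calls, which are not shown here. It does not describe Belo's actual setup.

```python
from typing import Callable, Sequence

# A provider is a (name, callable) pair; the callable takes a prompt and
# returns text. Real vendor SDK calls would be wrapped to fit this shape.
Provider = tuple[str, Callable[[str], str]]


class AllProvidersFailed(Exception):
    """Raised when every configured provider fails."""


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try each provider in order; return (provider_name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # e.g. suspended account, outage, rate limit
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins for illustration only.
def suspended_provider(prompt: str) -> str:
    raise PermissionError("account suspended")


def backup_provider(prompt: str) -> str:
    return f"[backup] {prompt}"


if __name__ == "__main__":
    used, answer = complete_with_fallback(
        "Summarize this ticket",
        [("primary", suspended_provider), ("backup", backup_provider)],
    )
    print(used, answer)
```

A layer like this keeps requests flowing when the primary account is unavailable, but it does not carry over prompts, conversation history, or tooling tuned to one model, which is the lost context described above.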

Ongoing Concerns

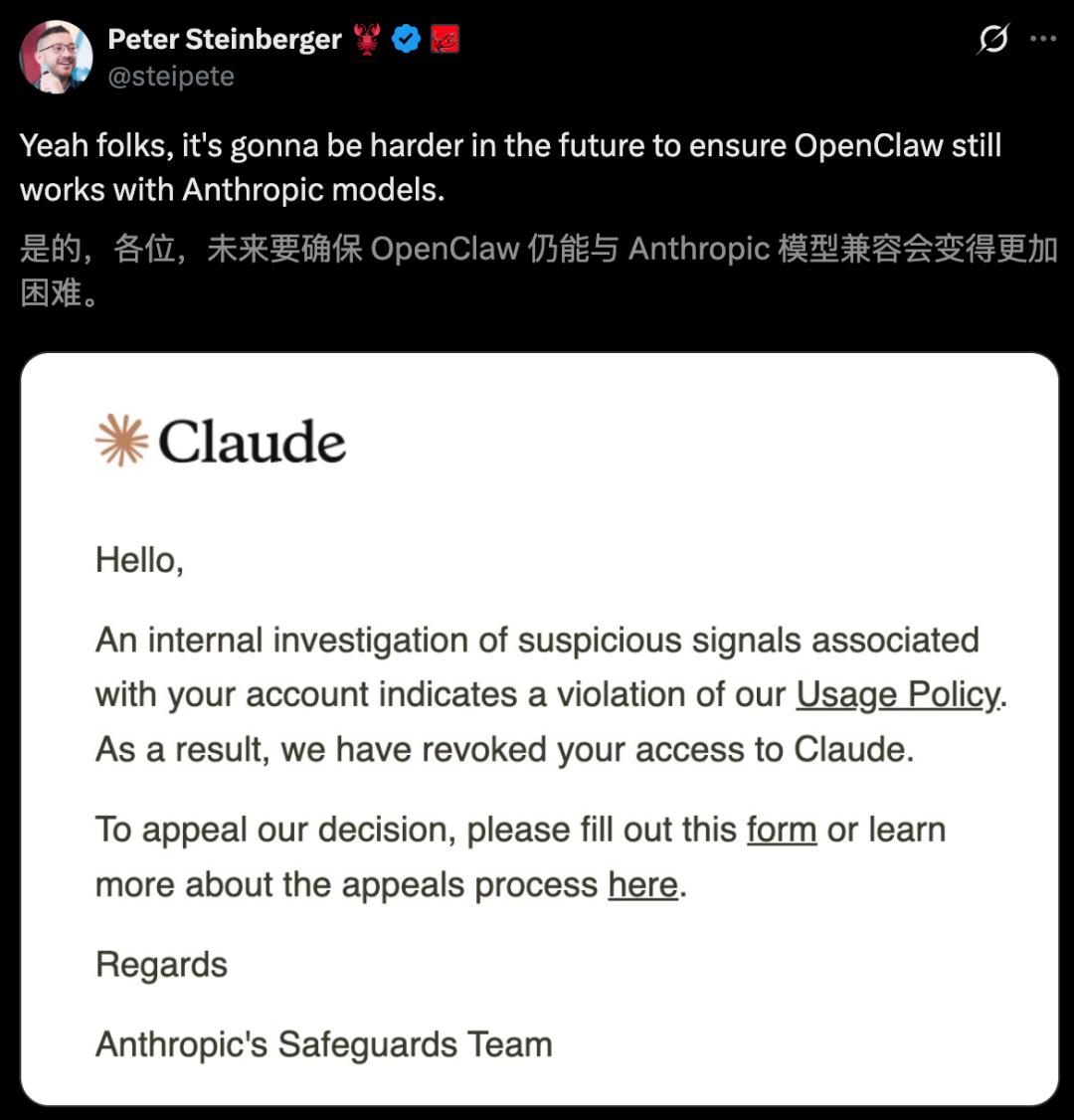

The Belo incident is not isolated. Just a week prior, another user reported their Claude account was suspended for suspicious activity, raising further concerns about the reliability of Anthropic’s systems.

Conclusion

Pato Molina’s experience underscores a critical survival lesson in the AI landscape of 2026. If your entire workflow depends on Claude, ask yourself whether your company could function if Claude disappeared tomorrow. If the answer is no, you are not just using an AI tool; you are gambling with your business’s future.
